This week’s seminar was devoted to listening to one of the lessons from the book (with matching CD) Critical Listening Skills for Audio Professionals, the object of which, I presume, is to refine our ability to focus our auditory perception to the high levels necessary to make successful audio engineering decisions. We examined variations in level at given frequencies, amongst other things, and determined that changes in level are generally more noticeable at lower frequencies and louder (starting) levels. In speech as well as music, 2 dB is (for most people) the smallest detectable change in level, which we discovered by listening to endless repeats of the same paragraph of text and the same extract of music. It would have been vastly more entertaining had the reader demonstrated this point by telling a dirty joke and playing fragments of, say, The Legendary Stardust Cowboy, but I appreciated the lesson nevertheless. It was also postulated that “random noise” can be defined as noise or sound which is constantly shifting in frequency and amplitude, and we listened to examples of such with specified centre frequencies. The narrower the bandwidth around that centre frequency, the more “like” that frequency the noise becomes. Sadly, this puts paid to my claim that my bands are all based around random noise.
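To put a number on how small a 2 dB step is, here is a minimal sketch of the standard decibel conversions. The function names are my own and the list of step sizes is only for illustration; none of this comes from the book or the CD.

```python
def db_to_amplitude_ratio(db):
    """Convert a level change in decibels to an amplitude (voltage) ratio."""
    return 10 ** (db / 20)


def db_to_power_ratio(db):
    """Convert a level change in decibels to a power ratio."""
    return 10 ** (db / 10)


# Illustrative step sizes; 2 dB is the figure quoted in the seminar.
for step in (1, 2, 3, 6):
    amp = db_to_amplitude_ratio(step)
    power = db_to_power_ratio(step)
    print(f"{step} dB: amplitude x{amp:.3f}, power x{power:.3f}")
```

On these formulas a 2 dB increase is roughly a 26% rise in amplitude, which goes some way to explaining why it took so many repeats to hear it.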

References

Everest, F. 2006, Critical Listening Skills for Audio Professionals, Course Technology, Boston.

Whittington, S., Concepts of Music (Aural) Seminar (personal notes) 31/07/08

Monday, August 4, 2008

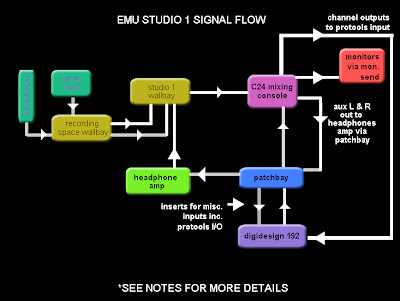

SES WEEK 1 STUDIO 1 SIGNAL FLOW

This week’s exercise involved describing the signal flow from a microphone or DI’d signal in the recording space to Pro Tools in Studio 1. To achieve this I teamed up with Sam Deere and we obtained the necessary information from the EMU (Studio) Guide, from Dave Grice during the week 1 seminar and from a physical inspection of Studio 1. The assignment also required a description of the talkback, headphones and studio monitors signal flows, all of which should be covered by the above diagram. For extra explanatory notes, see below.

Notes:

* Patchbay inputs to Pro Tools are normalised (i.e. no patchbay plugs required)

* Pro Tools outputs to patchbay are not normalised and need to be patched (Pro Tools 1 and Pro Tools 2 outputs to C24 Pro Tools source 1 and C24 Pro Tools source 2 inputs)

* For headphones mix, select monitor> auxiliary on C24

* Talkback via desk microphone, automatically patched to headphones (aux) send

* To patch a microphone or DI input via the Avalon pre-amp, either break the signal path from the microphone inputs at the Studio 1 wallbay by inserting the dedicated Avalon input (mic signal) lead, or (for a line level signal) plug the instrument directly into the socket on the front panel of the Avalon itself. Either option then needs to be patched on the patchbay from Avalon Out to Pro Tools In

References:

D.Grice, EMU Studio 1 Signal Flow, Sound Engineering Studio Lecture, 29/07/08

Adelaide University, Electronic Music Unit, Resources, Guides, Spaces, Studio 1

http://emu.adelaide.edu.au/resources/guides/spaces/studio.1.html, viewed 03/08/08

Tuesday, July 29, 2008

MTF - WEEK 1 - TOM DOWD DVD

The DVD “The Language of Music” presented a fascinating journey through the history of music recording technology from the viewpoint of one of the most important figures in this field, Tom Dowd. Dowd’s tenure as a recording engineer and producer seems to have spanned over 50 years, during which time music recording underwent the change from direct mastering (in the recording studio) to a rotating cylindrical disc, to ¼” mono tape recording, to 8-track multitrack recording destined for stereo playback and beyond. It’s interesting to note that the problems encountered with these very early direct-mastered recordings are still with us today when recording for vinyl release. Those recordings were cut at 88 grooves per inch, which could be increased up to 160 grooves per inch, but only by compromising the overall level (the result would be quieter) and risking the playback needle jumping out of the groove. A band that I recorded last semester at the EMU wants to release their recording on vinyl, and when we made enquiries at Australia’s only vinyl pressing plant (Zenith Records) we found that the same principle still applies: the longer the recording, the closer the groove spacing and the quieter the overall level, with the risk of needle jumping.
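The trade-off between groove spacing, playing time and level can be roughed out with some arithmetic. This is only a sketch under stated assumptions: the 3 inch recorded band and the 78 rpm speed are illustrative figures of mine, not numbers from the DVD or from Zenith, and the level estimate crudely assumes that the room available for the groove to wiggle shrinks in direct proportion to the groove spacing.

```python
import math


def side_minutes(grooves_per_inch, band_width_in, rpm):
    """Approximate playing time of one side cut at a constant groove pitch.

    Each revolution moves the cutter inward by one groove spacing, so the
    number of revolutions available is grooves_per_inch * band_width_in.
    """
    revolutions = grooves_per_inch * band_width_in
    return revolutions / rpm


def level_change_db(old_pitch, new_pitch):
    """Crude estimate of the level change when the groove pitch changes.

    ASSUMPTION: maximum groove excursion scales with groove spacing,
    which ignores the width of the groove itself.
    """
    return 20 * math.log10(old_pitch / new_pitch)


# ASSUMPTIONS (hypothetical values, not from the source material)
BAND_WIDTH_IN = 3.0
RPM = 78.0

for pitch in (88, 160):
    minutes = side_minutes(pitch, BAND_WIDTH_IN, RPM)
    print(f"{pitch} grooves per inch: about {minutes:.1f} minutes per side")

print(f"Estimated level change, 88 to 160: {level_change_db(88, 160):.1f} dB")
```

Under those assumptions the tighter pitch nearly doubles the playing time at a cost of roughly 5 dB, which is the same bargain the pressing plant describes.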

References

Anon, Preparing Your Master, Zenith Records

http://www.zenithrecords.org/masters.html, viewed 03/08/08

C. Haines, Seminar, Music Technology Forum, 29/07/08

Various, The Language of Music (DVD), 2003

(Mark Moorman)

Friday, June 27, 2008

SOUND ENGINEERING (STUDIO) A - STUDIO PROJECT

The band I chose to record for this project is local 3 piece "Glamville". Their line-up consists of drummer, guitarist/vocalist and bass player/vocalist and their musical style could be described as jazz-punk. I knew Glamville would be a difficult recording subject as their songs are very loosely structured and the element of spontaneity is paramount in their musical ethos, which makes it difficult to record one instrument at a time and so maintain absolute production cleanliness. In the studio, I compromised with the band by separating them physically, placing the guitarist in the dead room and the drummer and bass player in the recording space with baffles strategically positioned. Naturally I still had trouble with spill but the band was happy to be able to lay the bed tracks down together. The bed tracks were laid in 2 x 4 hour sessions with another 4 hour session allocated to overdubbing extra guitar parts, vocals and a last-minute piano track. I then spent around 12 hours mixing down, cleaning up and generally earning my keep as a putative sound engineer. Click below to hear the result.

MP3 MIXDOWN - GLAMVILLE - HERMETIC CO-ORDINATES - CLICK HERE

MP3 MIXDOWN - GLAMVILLE - INSOLUBLE SOLUTION - CLICK HERE

PROJECT PRODUCTION AND RECORDING NOTES PDF - CLICK HERE

Labels:

glamville,

punk-jazz,

recording,

sound production

Wednesday, June 25, 2008

AUDIO STUDIES - CREATIVE PROJECT

Program Note

Emu Challenge

Dorothy Pawlowski

03:14

EMU CHALLENGE MIXDOWN MP3 - CLICK HERE

Whenever I think of video game music, I think of the music of the Commodore 64 and (later) the Super Nintendo, gaming machines of the 1980s and early 1990s. By today’s standards, these machines were capable of producing only low resolution audio, often played back through a small speaker. The result was usually brittle, noisy and trashy.

When writing this, my own hypothetical video game music “Emu Challenge”, I deliberately attempted to emulate the sound of early digital audio by including “noisy” percussion samples and copying the vibrato-heavy, string-like synthesizer sounds which were typically used for melody instruments at the time.

Emu Challenge

The hero of this video game is an emu who faces challenges within three main gaming zones, namely The Adventure Zone, The Spooky Zone and The Fun Zone. All of the sequencing for the final work was carried out in Pro Tools LE (session bit depth 24 and sample rate 48 kHz).

The percussion samples for the work were either obtained from the GarageBand library or manufactured using the ES1 and FM synthesizers in Logic Pro. I obtained sound effects from the web as well as recording voice tags which I felt would emphasise the “game” flavour of the music, editing and normalising some of the samples in Peak LE as necessary. The melodic components were composed in Logic Pro using the matrix editor and ES1 synthesizer, which I found most appropriate to the style of music. I exported the melodies as 24-bit WAV audio files from Logic and imported them into the Pro Tools region list, converting the sample rate to match the Pro Tools session sample rate. The Spooky Zone melodies utilised the “swing” function of Logic, as I wished this section to have a less rigid feel to the meter.

After manufacturing, recording and collecting all of this source material, I arranged it accordingly in Pro Tools, mostly using grid mode, and performed mixing, panning, automation and organisational operations as well as applying plug-ins. Although normalised, there were still problems with varying levels between the vocal effects tags and there were no tracks left over to allocate each tag a track of its own (as I had already used all of the tracks available in Pro Tools LE at this sample rate and bit depth), so volume automation was particularly necessary on the two “FX” tracks.
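One likely reason the tags still sounded uneven after normalising is that peak normalisation only matches the loudest single sample, not the average level. The sketch below illustrates this with two made-up clips; the clips, the function names and the use of RMS as a stand-in for perceived level are all assumptions for illustration, and this is not what Peak LE does internally.

```python
import math


def peak_normalise(samples, target_peak=1.0):
    """Scale samples so the largest absolute value equals target_peak."""
    peak = max(abs(s) for s in samples)
    if peak == 0:
        return list(samples)
    gain = target_peak / peak
    return [s * gain for s in samples]


def rms_db(samples):
    """RMS level in dB relative to full scale (1.0); input must not be silent."""
    mean_square = sum(s * s for s in samples) / len(samples)
    return 10 * math.log10(mean_square)


# Two hypothetical "voice tags" at 48 kHz: a steady tone, and a quiet
# version of the same tone with a single loud spike in it.
steady = [math.sin(2 * math.pi * 440 * n / 48000) for n in range(4800)]
spiky = [0.1 * s for s in steady]
spiky[100] = 0.9

for name, clip in (("steady", steady), ("spiky", spiky)):
    norm = peak_normalise(clip)
    peak = max(abs(s) for s in norm)
    print(f"{name}: peak {peak:.2f}, RMS {rms_db(norm):.1f} dBFS")
```

Both clips end up with the same peak, yet their RMS levels differ by well over 10 dB, which is the kind of gap that volume automation then has to close by hand.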

The Adventure Zone

Meter: 4/4

Tempo: 160 bpm

Key: C minor

(In this section I have departed from my original idea (as per proposal) of using C major as the key because I found when I began writing the melody that the feeling of a strictly major key was not “dangerous” enough.)

The Spooky Zone

Meter: 4/4

Tempo: 160 bpm

Key: G minor

The Fun Zone

Meter: 6/8

Tempo: 180 bpm

Key: C major
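Since the arranging was mostly done in grid mode, the tempos above translate directly into grid positions measured in samples. A minimal sketch, assuming the stated 48 kHz session rate; the number of beats per bar for The Fun Zone is my own assumption (6/8 counted as two beats at 180 bpm), as the notes do not say which note value the tempo refers to.

```python
from fractions import Fraction

SAMPLE_RATE = 48_000  # session sample rate stated in the notes


def samples_per_beat(bpm, sample_rate=SAMPLE_RATE):
    """Exact number of samples in one beat at the given tempo."""
    return Fraction(60 * sample_rate, bpm)


def samples_per_bar(bpm, beats_per_bar, sample_rate=SAMPLE_RATE):
    """Exact number of samples in one bar."""
    return samples_per_beat(bpm, sample_rate) * beats_per_bar


zones = {
    "The Adventure Zone": (160, 4),
    "The Spooky Zone": (160, 4),
    "The Fun Zone": (180, 2),  # ASSUMPTION: 6/8 felt as two beats per bar
}

for name, (bpm, beats) in zones.items():
    bar = samples_per_bar(bpm, beats)
    seconds = float(bar / SAMPLE_RATE)
    print(f"{name}: {bar} samples per bar ({seconds:.3f} s)")
```

At these tempos every bar line falls on a whole sample, so imported regions can sit exactly on the grid.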

Production Credits

Voice Tags: Captain I (appears by permission of Sleazy Studios)

Labels:

audio,

Captain I,

Logic Pro,

Pro Tools,

sequencing,

Sleazy Studios,

video game music

Tuesday, June 17, 2008

MIDI STUDIES A - CREATIVE PROJECT

Other Girlfriend – An original song

By Dorothy Pawlowski

03:48

The concept behind this rendering of Other Girlfriend was to take a simple song that I wrote for a punk-pop band, and re-work it into a suitable format for the dance floor.

I began by writing the bass line using a MIDI track and a virtual software synth (the ES1), as the simple bass line determines the overall structure of the entire song. In order to rewrite the song as a dance piece in the context of a MIDI file, it was necessary for me to write all of the drum beats completely from scratch. To do this I used the Ultrabeat function of Logic Pro, which is a multi-timbral virtual drum machine that occupies only one MIDI instrument track within Logic. I wrote several different drum beats, modifying the preset drum samples to suit my needs, i.e. changing them to “fat” sounds with a lot of distortion. When I had the drum beats completed, I arranged them as required in the arrange window and added two more virtual instrument MIDI tracks for melody parts, one with GarageBand’s Morph Synthesizer.

At this point I wished to add some audio tracks in the form of vocals. I decided to do this in Studio 2 but had some difficulty getting an audio signal into Logic there. In order to overcome this, I recorded the vocal tracks in Pro Tools and later synchronised them back into Logic in the Audio Lab. After arranging the main vocal tracks appropriately, I chopped smaller samples out of them and used these for effect in the “middle 32, breakdown” section.

I then established a ReWire track using Reason as the slave, feeding it back into Logic on two audio channels, and wrote another MIDI track with “effects” type sounds to control this.

Finally, I applied plug-ins to channels as I felt necessary and automated these as well as volume and panning, particularly on the vocal channels, where automation let me correct the discrepancies in level.

OTHER GIRLFRIEND MIDI FILE

OTHER GIRLFRIEND MP3 MIXDOWN

Tuesday, June 10, 2008

CONCEPTS OF MUSIC - WEEK 12 - PITCH PERCEPTION

Music is the audible manifestation of maths. Onya Pythagoras. OK, I am officially lost and if maths is the basis of all reality then I am doubly stuffed as neither maths nor reality is my best subject and I reckon I just might have my head up my hem hem. Anyway, Pyth apparently surmised that each of the 7 planets in our solar system would produce a musical note dependent upon its distance from the “centre” (being Earth in this hypothesis): like the different lengths of resonating strings, for example. This was called “Musica Mundana” or as we would say "Music of the Spheres" and would be indiscernible to our presumably too-worldly ears. What a beautiful idea. There IS a harmonic and numeric relationship between all things from the greatest in scale to the smallest; it has been well-documented in any number of mystic publications and websites but it still has credence for all that. Some people become obsessed with these relationships and are consequently and unkindly regarded by our society as cranks. I think it is well to notice the numeric underpinning to cosmic structure but do not let the desire to understand it dominate your life, for what is... is.
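For the less mathematically lost, the string idea can be made concrete. The sketch below builds the seven-note scale usually attributed to Pythagoras by stacking perfect fifths (a 3:2 frequency ratio) and folding each result back into one octave. It assumes an idealised string whose frequency is inversely proportional to its length, and the function name is my own.

```python
from fractions import Fraction


def pythagorean_scale():
    """Seven ratios from stacked perfect fifths (3:2), folded into one octave."""
    ratios = []
    ratio = Fraction(2, 3)  # start a fifth below the tonic so the fourth appears
    for _ in range(7):
        folded = ratio
        while folded < 1:
            folded *= 2  # raise by an octave
        while folded >= 2:
            folded /= 2  # lower by an octave
        ratios.append(folded)
        ratio *= Fraction(3, 2)
    return sorted(ratios)


for r in pythagorean_scale():
    # ASSUMPTION: ideal string, so relative length is the inverse of the ratio
    print(f"frequency ratio {r}, relative string length {1 / r}")
```

Every note comes out as a ratio of powers of 2 and 3, which is about as much numeric underpinning as one scale can take.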

Here is a source of entertaining misinformation about Pythagoras

References:

Stephen Whittington, Lecture, Concepts of Music (Aural), 05/06/08

John Boyd-Brent, Harmony and Proportion, http://www.aboutscotland.co.uk/harmony/prop.html

Viewed 10/06/08

Labels:

mathematics,

music,

Pythagoras
